Operating System Concept: Memory Management - Main Memory

Background

- A program must be loaded into main memory before the CPU can fetch its instructions into registers and execute them.

- A program needs somewhere to store data in memory. How does a program know the memory address it needs to access?

- The number of programs that fit in memory determines the degree of multiprogramming. Is there a way to improve memory utilization?

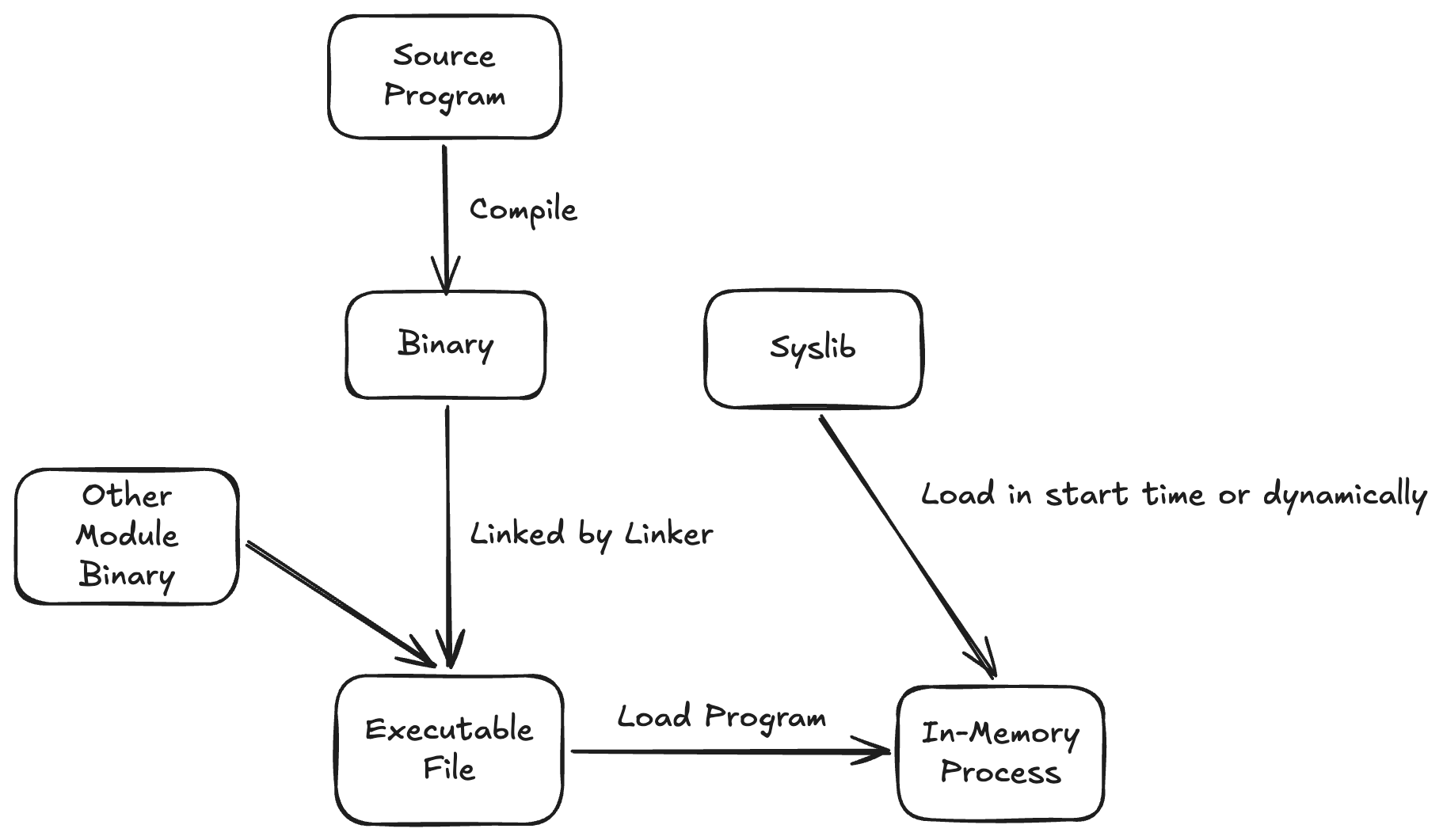

Control Flow of Loading a Program into Memory

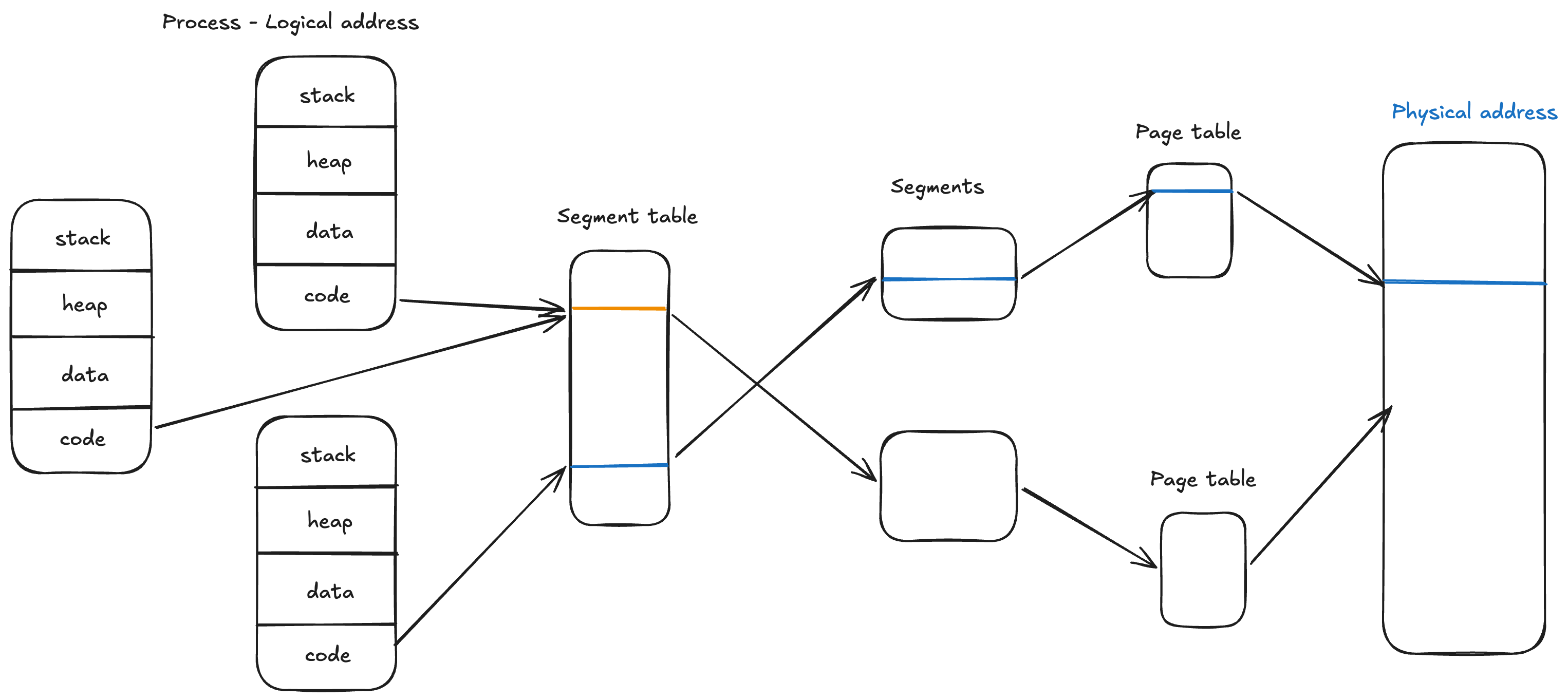

Segmentation

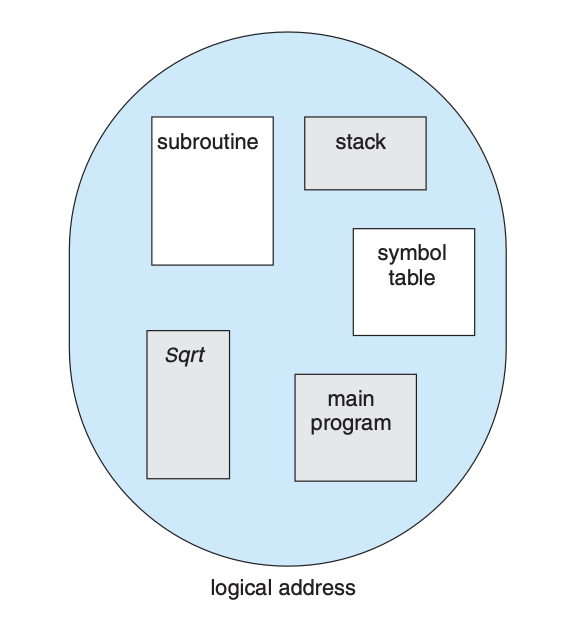

Users who write programs do not really care where the machine code or data is located in physical memory.

From a programmer’s view, a program consists of the functions, classes, variables, and data they define and allocate.

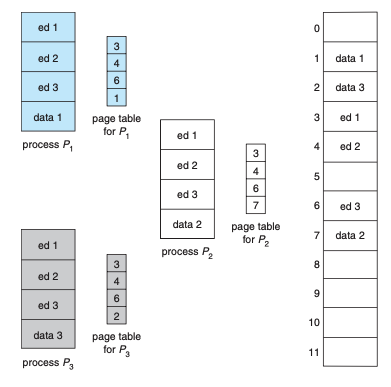

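Segmentation is a memory-management scheme that supports the programmer’s view of memory. It divides a program’s logical address space into variable-sized segments such as code, static data, heap, and stack. A logical address is a pair (segment number, offset), and a segment table stores each segment’s base (starting physical address) and limit (length).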

A segment such as code can be shared by several processes, so only one copy of it needs to reside in physical memory.
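A minimal Python sketch of segmented address translation, assuming a segment table of (base, limit) entries. The names `SegmentEntry`, `translate_segmented`, and `SegmentationFault` are hypothetical, and the table values are made up for illustration: the offset is checked against the segment's limit and then added to its base.

```python
from typing import List, NamedTuple


class SegmentEntry(NamedTuple):
    base: int   # starting physical address of the segment
    limit: int  # length of the segment in bytes


class SegmentationFault(Exception):
    """Models the trap raised when an address falls outside its segment."""


def translate_segmented(segment_table: List[SegmentEntry], segment: int, offset: int) -> int:
    """Translate the logical address (segment, offset) into a physical address."""
    if not 0 <= segment < len(segment_table):
        raise SegmentationFault(f"invalid segment number {segment}")
    entry = segment_table[segment]
    if not 0 <= offset < entry.limit:
        raise SegmentationFault(f"offset {offset} is outside segment {segment} (limit {entry.limit})")
    return entry.base + offset


# Hypothetical segment table for one process (addresses in bytes).
segment_table = [
    SegmentEntry(base=1400, limit=1000),  # 0: code
    SegmentEntry(base=6300, limit=400),   # 1: static data
    SegmentEntry(base=4300, limit=400),   # 2: heap
    SegmentEntry(base=3200, limit=1100),  # 3: stack
]

print(translate_segmented(segment_table, 2, 53))  # 4353
```

Because segments are variable-sized, each one still needs its own contiguous block of physical memory; only the segments as a whole may be scattered.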

Paging

Memory Allocation

If we load a program into a single contiguous block of physical memory, two problems arise:

- Each program's physical addresses must be fixed in advance, for example at compile time.

- It is hard to find a contiguous free block (hole) large enough for the program, especially a large one. Free memory tends to break into many small holes that together are large enough but individually are too small (external fragmentation).
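The hole-finding problem can be sketched with a first-fit allocator over a free list. This is a minimal Python illustration, assuming holes are stored as hypothetical `(start, length)` pairs in KB; `first_fit` is an illustrative name, not an API from any real allocator. It shows a request failing even though total free memory exceeds it.

```python
from typing import List, Optional, Tuple


def first_fit(holes: List[Tuple[int, int]], size: int) -> Optional[int]:
    """Allocate `size` units from the first hole (start, length) that is large enough.

    The chosen hole is shrunk in place. Returns the start address, or None when
    no single hole can hold the request.
    """
    for i, (start, length) in enumerate(holes):
        if length >= size:
            if length == size:
                del holes[i]  # hole consumed exactly
            else:
                holes[i] = (start + size, length - size)
            return start
    return None


# Hypothetical free list, in KB: three scattered holes.
holes = [(100, 200), (500, 150), (900, 250)]
print(sum(length for _, length in holes))  # 600 KB free in total
print(first_fit(holes, 400))               # None: no single hole is 400 KB
print(first_fit(holes, 180))               # 100
```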

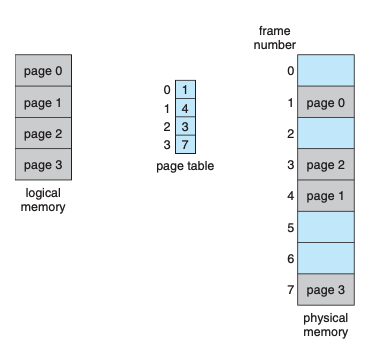

Paging divides physical memory into fixed-size blocks called frames and a program's logical memory into blocks of the same size called pages. Each page can be placed in any free frame, which removes the requirement that a program occupy a contiguous range of physical addresses.
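A minimal Python sketch of loading a program under paging, assuming a 4 KB page size and a simple list of free frame numbers. `allocate_pages` and the frame numbers are hypothetical. The program's pages land in whatever frames are free, contiguous or not, and the result is the program's page table.

```python
from typing import List

PAGE_SIZE = 4096  # assumed page/frame size in bytes (4 KB)


def allocate_pages(program_size: int, free_frames: List[int]) -> List[int]:
    """Return a page table for a program of `program_size` bytes.

    Entry i of the page table is the frame that holds page i. Frames are taken
    from the front of the free-frame list and need not be contiguous.
    """
    num_pages = -(-program_size // PAGE_SIZE)  # ceiling division
    if num_pages > len(free_frames):
        raise MemoryError("not enough free frames")
    return [free_frames.pop(0) for _ in range(num_pages)]


free_frames = [14, 13, 18, 20, 15]  # hypothetical free frames, scattered in memory
page_table = allocate_pages(10_000, free_frames)
print(page_table)   # [14, 13, 18]
print(free_frames)  # [20, 15]
```

A 10,000-byte program needs three 4 KB pages, and any three free frames will do; no search for one large hole is required.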

Page Table

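The page table maps each page of the logical address space to a frame of the physical address space. A logical address is split into a page number p and a page offset d; p indexes the page table to find the frame number f, and the physical address is f × (page size) + d.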
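A minimal Python sketch of single-level page-table translation, assuming 4 KB pages (so the low 12 bits are the offset) and a page table stored as a plain list of frame numbers. `translate` and the table contents are hypothetical.

```python
from typing import List

PAGE_SIZE = 4096                          # assumed page size: 4 KB
OFFSET_BITS = PAGE_SIZE.bit_length() - 1  # 12 bits of page offset


def translate(page_table: List[int], logical_address: int) -> int:
    """Translate a logical address to a physical address with a single-level page table."""
    page_number = logical_address >> OFFSET_BITS  # p: high-order bits
    offset = logical_address & (PAGE_SIZE - 1)    # d: low-order OFFSET_BITS bits
    if page_number >= len(page_table):
        raise IndexError(f"page {page_number} is not mapped")
    frame_number = page_table[page_number]        # f: looked up in the page table
    return frame_number * PAGE_SIZE + offset


page_table = [5, 6, 1, 2]  # hypothetical: page 0 -> frame 5, page 1 -> frame 6, ...
print(hex(translate(page_table, 0x1234)))  # 0x6234 (page 1, offset 0x234, frame 6)
```

Because the page size is a power of two, splitting the address is just a shift and a mask, which is why hardware page sizes are always powers of two.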

Fragmentation

With paging, fragmentation refers to memory wasted because physical memory is partitioned into fixed-size frames: the last frame allocated to a process is usually only partly used.

A common frame size is 4 KB. The trade-off between large and small frame sizes is:

- Frames too large: more unused space is wasted inside each process's last frame (internal fragmentation).

- Frames too small: a process needs more pages, so its page table grows larger, and a page table typically must be stored in contiguous memory.
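The trade-off can be quantified with a small Python sketch. It assumes a 32-bit logical address space, 4-byte page-table entries, and a single-level page table covering the whole address space; `paging_costs` and the 10,000-byte process are hypothetical.

```python
from typing import Tuple


def paging_costs(process_size: int, page_size: int,
                 address_bits: int = 32, pte_bytes: int = 4) -> Tuple[int, int]:
    """Return (internal fragmentation, page-table size) in bytes for one process.

    Assumes a single-level page table that covers the whole 2**address_bits
    logical address space, with pte_bytes bytes per page-table entry.
    """
    pages = -(-process_size // page_size)  # ceiling division
    internal_fragmentation = pages * page_size - process_size
    page_table_bytes = (2 ** address_bits // page_size) * pte_bytes
    return internal_fragmentation, page_table_bytes


for page_size in (1024, 4096, 65536):
    waste, table = paging_costs(10_000, page_size)
    print(f"{page_size:>6} B pages: {waste:>6} B wasted, {table // 1024:>6} KB page table")
```

Under these assumptions, 1 KB pages waste only 240 bytes but need a 16 MB page table, 4 KB pages waste 2,288 bytes with a 4 MB table, and 64 KB pages shrink the table to 256 KB while wasting 55,536 bytes.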

TLB

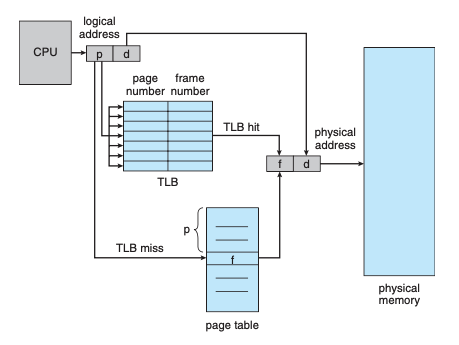

With paging, every memory access must first look up the page table, which itself resides in memory, so each instruction or data access costs two memory accesses. This can noticeably slow execution.

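The TLB (Translation Lookaside Buffer) is a small hardware cache inside the MMU that stores recently used page-number-to-frame-number translations. On a TLB hit, the frame number is available immediately; on a miss, the page table in memory is consulted and the translation is added to the TLB.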
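A minimal Python sketch of a TLB sitting in front of the page table. It assumes a fully associative TLB with least-recently-used (LRU) replacement; real hardware may use other organizations and policies, and the class name `TLB` and the access pattern are hypothetical. It counts hits and misses for a short sequence of page references.

```python
from collections import OrderedDict
from typing import List


class TLB:
    """A small fully associative TLB with LRU replacement (an assumed policy)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.entries = OrderedDict()  # page number -> frame number, oldest first
        self.hits = 0
        self.misses = 0

    def lookup(self, page_number: int, page_table: List[int]) -> int:
        if page_number in self.entries:            # TLB hit: no page-table access needed
            self.hits += 1
            self.entries.move_to_end(page_number)  # mark as most recently used
            return self.entries[page_number]
        self.misses += 1                           # TLB miss: consult the page table
        frame = page_table[page_number]
        self.entries[page_number] = frame
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)       # evict the least recently used entry
        return frame


page_table = [7, 3, 9, 4]  # hypothetical page table
tlb = TLB(capacity=2)
for page in [0, 0, 1, 0, 1, 2, 2, 0]:
    tlb.lookup(page, page_table)
print(tlb.hits, tlb.misses)  # 4 4
```

Every hit saves one page-table access, so the more a program reuses the same few pages, the closer the average memory access gets to a single memory access.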

Shared Pages

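One advantage of paging is that common code can be shared. If the code is reentrant (it never modifies itself), the page tables of several processes can map their code pages to the same physical frames, so multiple processes execute the same code at the same time while only one copy resides in memory.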
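A minimal Python sketch of shared pages, assuming three processes run the same editor whose first three pages are reentrant code and whose last page is private data. The page tables and the helper `frames_in_use` are hypothetical; the point is that identical frame numbers in different page tables mean one physical copy.

```python
from typing import List


def frames_in_use(*page_tables: List[int]) -> int:
    """Count the distinct physical frames referenced by a set of page tables."""
    return len({frame for table in page_tables for frame in table})


# Hypothetical page tables for three processes running the same editor.
# Pages 0-2 hold the shared, reentrant editor code; page 3 holds private data.
process_a = [3, 4, 6, 1]
process_b = [3, 4, 6, 7]
process_c = [3, 4, 6, 2]

print(frames_in_use(process_a, process_b, process_c))  # 6 frames instead of 12
```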

Memory Protection

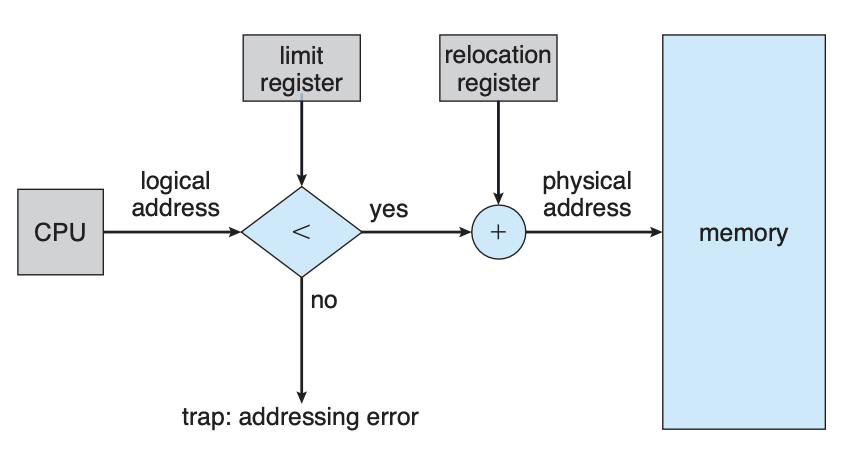

The goal of memory protection is to prevent processes from interfering with each other.

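When a context switch happens, the dispatcher loads the relocation and limit registers with the values for the new process. Address checking happens in the logical address space: each logical address the CPU generates is compared with the limit register, and only addresses smaller than the limit are valid. A valid address is added to the relocation register to form the physical address; an invalid one causes a trap to the operating system.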
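A minimal Python sketch of relocation-plus-limit protection. The `MMU` class, its method names, and the register values are hypothetical models of the hardware, not a real interface. The dispatcher step loads the registers, and every translation checks the logical address against the limit before relocating it.

```python
class AddressingError(Exception):
    """Models the trap to the operating system on an out-of-range access."""


class MMU:
    """Hypothetical model of a relocation (base) register plus a limit register."""

    def __init__(self) -> None:
        self.relocation = 0
        self.limit = 0

    def load_registers(self, relocation: int, limit: int) -> None:
        """Done by the dispatcher during a context switch."""
        self.relocation = relocation
        self.limit = limit

    def translate(self, logical_address: int) -> int:
        # The check happens on the logical address, before relocation.
        if not 0 <= logical_address < self.limit:
            raise AddressingError(f"logical address {logical_address} is outside [0, {self.limit})")
        return logical_address + self.relocation


mmu = MMU()
mmu.load_registers(relocation=14000, limit=1000)  # hypothetical values for the next process
print(mmu.translate(346))  # 14346
```

Since a process can only generate logical addresses below its own limit, it can never reach memory that belongs to another process.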

Combining Segmentation and Paging
